Building Canada’s AI Infrastructure

Compute, energy, data, and ownership are becoming the foundations of the twenty-first-century economy. Canada is making a measured bet — the question is whether it will be enough.

Infrastructure rarely makes headlines. But in the AI era, chips, power grids, and data centres will shape Canada’s sovereignty, productivity, and long-term economic resilience.

Affordability Dominates. But the Terrain Has Shifted.

Recent polling suggests only about six percent of Canadians rank artificial intelligence among the country’s top three concerns.

That isn’t surprising.

Housing costs, grocery bills, healthcare access — these are immediate pressures. For most households, AI feels like a personal decision (“Should I use it?”) or a workplace anxiety (“Will it change my job?”). It is rarely discussed as national infrastructure.

But polling reflects what’s pressing right now at the kitchen table. It doesn’t measure the long-term changes that quietly redraw the map of opportunity.

And structural change rarely announces itself in advance.

For most of the twentieth century, electricity quietly powered the industrial economy. Few people thought about the grid when they flipped a switch. But factories, transportation networks, communications systems — entire cities — depended on it.

Artificial intelligence is beginning to occupy a similar role.

Not as an app.

Not as a novelty.

But as a layer of capacity beneath the economy.

When companies talk about “compute,” they are not using jargon for its own sake. They are referring to the physical power required to train and operate AI systems — specialized chips housed inside energy-intensive data centres.

That shift — from optional software to foundational capacity — is already underway globally.

The question is not whether Canadians feel urgency about it yet.

The question is whether Canada recognizes the terrain is moving beneath its feet.

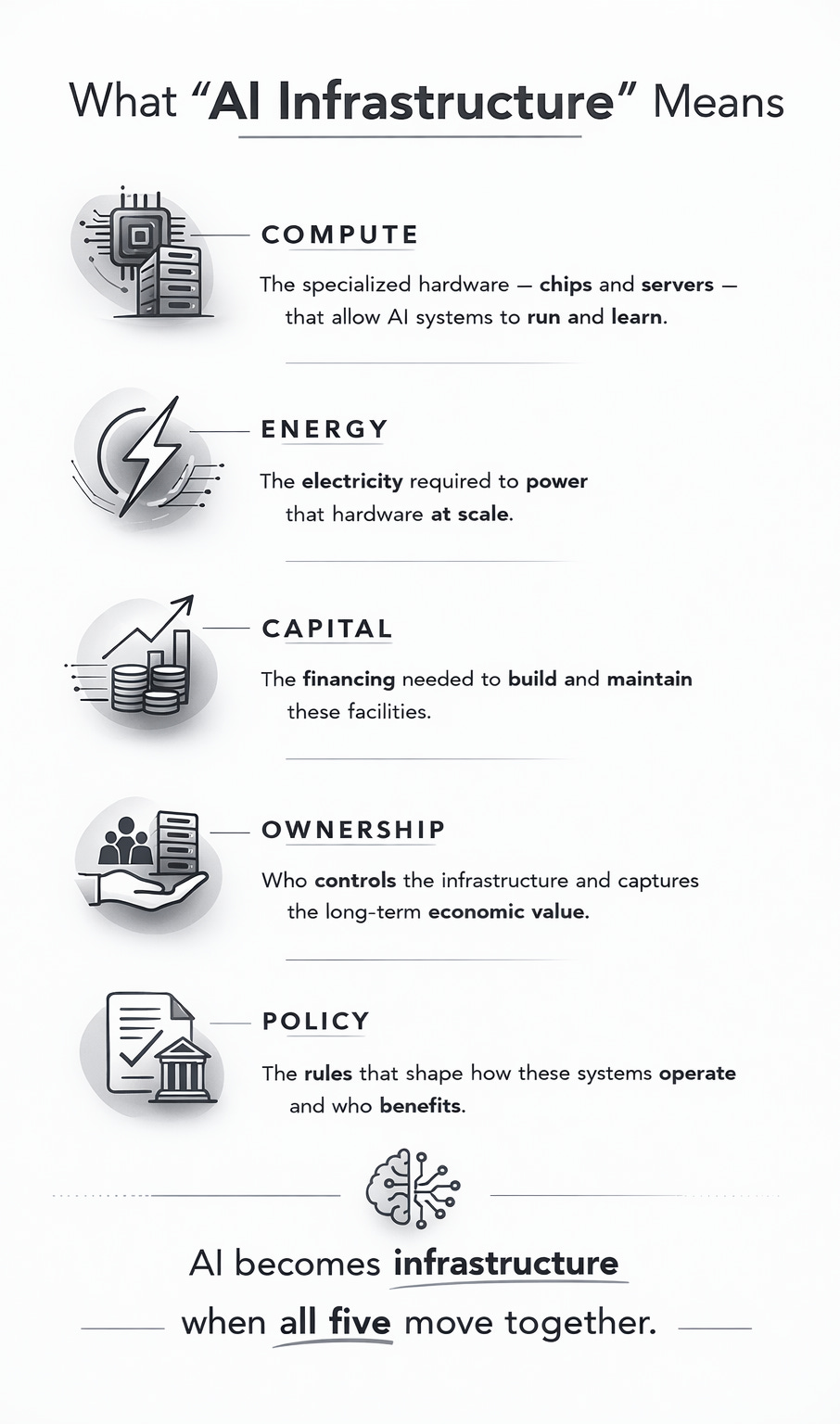

What “AI Infrastructure” Actually Means

If artificial intelligence is becoming infrastructure, what does that really mean?

It means physical capacity.

AI systems do not live in a weightless cloud. They run on specialized computer chips housed inside vast data centres — buildings filled with servers that draw enormous amounts of electricity.

When companies say they need more “compute,” they mean they need more of that physical power: more advanced chips, more servers, more energy to run them.

Without compute, AI does not scale.

Without scale, it does not transform industries.

One of the most important components in that stack is the GPU — short for “graphics processing unit.”

Originally designed to render video game graphics, GPUs turned out to be exceptionally good at performing the parallel mathematical calculations that modern AI requires. Today, advanced GPUs — many designed by U.S. firm NVIDIA — are the engines behind large language models and other generative AI systems.

When countries talk about securing AI capacity, they are, in practical terms, talking about securing access to those chips.

And that is where Canada’s story becomes more complicated.

From Research Strength to Industrial Build

Canada did not arrive late to artificial intelligence.

In fact, it helped shape the field.

The Pan-Canadian AI Strategy, first launched in 2017 and renewed in 2022, made Canada one of the earliest public investors in deep learning research. Institutes like MILA in Montreal, the Vector Institute in Toronto, and Amii in Edmonton trained researchers who now work across the global AI ecosystem.

Canada built intellectual capital.

But research strength and infrastructure strength are not the same thing.

Training advanced AI models at scale requires thousands of specialized chips running in parallel inside energy-intensive data centres. Over the past decade, that physical capacity concentrated largely in the United States and parts of Asia.

Canada trained the talent.

Others built much of the hardware.

In Budget 2024, Ottawa signalled that it understood this gap.

The federal government committed up to $2 billion toward a Canadian Sovereign AI Compute Strategy — an effort to build domestic computing capacity rather than relying entirely on foreign cloud providers.

By Canadian standards, this is a serious industrial investment.

By global standards, it is measured.

U.S. semiconductor and AI-related programs run into the tens of billions. Major American cloud firms invest similar sums annually in expanding data-centre capacity. China’s state-directed semiconductor strategy operates at even larger scale.

Canada is not trying to outspend global giants.

It is trying to ensure it is not entirely dependent on them.

The strategy aims to attract private capital into new or expanded Canadian data centres, build a domestically owned high-performance supercomputing system, expand public research capacity in the near term, and give smaller Canadian firms a foothold in compute capacity they could not afford to build alone.

This is not a bid to recreate Silicon Valley.

It is an attempt to build enough domestic capacity to participate from a position of greater strength.

Whether that is sufficient will depend on what comes next.

The Energy Equation: Advantage and Constraint

Artificial intelligence does not run on abstraction. It runs on electricity.

Training advanced AI models requires thousands of high-performance chips operating simultaneously inside specialized data centres. Those facilities consume enormous amounts of power — not only to run the hardware, but to cool it.

Energy is not a side consideration in the AI era. It is foundational.

Here, Canada holds a genuine structural advantage.

Quebec’s hydroelectric grid provides large-scale, relatively low-carbon power. Montreal has emerged as a data-centre hub in part because of that supply. Ontario brings nuclear baseload capacity and dense fibre networks. Alberta offers competitive industrial pricing and regulatory speed, though with a more carbon-intensive mix.

For AI firms, access to reliable, stable power matters as much as access to capital.

But advantage does not mean abundance without limit.

Hydro-Québec has projected sharply rising demand from data centres over the coming decade, and higher rates for large new industrial users are already under discussion. Grid planners across provinces are balancing AI expansion alongside electric vehicle adoption, housing growth, and broader electrification.

There is also a quieter tension.

AI infrastructure is energy intensive. Even when powered by cleaner sources, it requires transmission lines, substations, cooling systems, land, and water. Environmental trade-offs are real — from water use in cooling systems to strain on local grids near large builds.

The industry prefers cleaner grids where possible. Canada’s lower-carbon electricity mix is a competitive strength.

But there is no version of large-scale AI that is energy light.

Energy is leverage.

It is also constraint.

And in a world where compute demand is rising quickly, jurisdictions that can offer stable, scalable power will influence where infrastructure locates — and where long-term economic value follows.

The Compute Constraint

Even sovereign data centres depend on foreign silicon.

The advanced chips that power modern AI systems — particularly high-end GPUs — are designed primarily by U.S.-based firms such as NVIDIA and AMD. Manufacturing is concentrated in a small number of highly specialized facilities globally.

Canada does not produce frontier AI chips.

It can build the buildings.

It can supply the electricity.

It can fund access.

But the processors that perform the core AI calculations remain largely outside domestic control.

This matters because scale matters.

Training advanced AI models requires running thousands of GPUs in parallel for extended periods. Operating those systems at scale — serving millions of users — requires continued access to high-performance hardware.

Without sufficient chip access, a country’s AI capacity is constrained — regardless of its research strength.

And these chips are no longer treated as ordinary commercial goods.

Advanced semiconductors have become central to geopolitical competition, particularly between the United States and China. Export controls have tightened around the most advanced hardware, shaping where certain chips can be sold and deployed.

Canada, as a close U.S. ally and deeply integrated trade partner, is not targeted by those restrictions.

But it is also not the decision-maker.

If global supply tightens, if export priorities shift, or if domestic U.S. demand outpaces production, access to cutting-edge hardware will be influenced by choices made elsewhere.

This is not a crisis scenario.

It is a structural reality.

Sovereign compute reduces reliance on foreign cloud providers. It does not eliminate reliance on foreign chip supply.

Infrastructure is layered.

Ownership of data centres is one layer.

Control of advanced silicon is another.

Understanding that distinction clarifies both the ambition — and the limits — of Canada’s current strategy.

The Missing Middle

Imagine a small AI firm founded in Montreal.

Two researchers trained at MILA — Montreal’s institute for machine learning and deep neural network research — spin out a company building an AI tool to help hospitals analyze diagnostic imaging more efficiently.

Their expertise is strong. Early funding comes from Canadian venture capital. The founding team hires engineers locally. Within a few years, the company grows to 40 employees.

The early years are promising.

But then it needs to scale.

Training larger models requires sustained access to high-performance GPUs. Expanding into U.S. healthcare markets requires regulatory expertise and deeper capital. A Series C funding round approaches — the moment when a startup either becomes a global contender or stalls.

At that stage, comparisons sharpen.

American investors can often offer larger pools of capital and deeper procurement networks. Compensation structures — particularly stock options and equity treatment — may be more attractive to senior talent. U.S. capital markets are broader, and scaling pathways more mature.

Tax structure is not the only factor in these decisions, but it is part of the equation. Capital cost, equity treatment, regulatory friction, and market access all shape where firms anchor long-term growth. The United States has deliberately structured elements of its system to accelerate expansion in strategic sectors.

That does not mean Canada should mirror every incentive. Tax policy serves broader public goals beyond any one industry. But when scaling firms compare jurisdictions, the full operating environment inevitably enters the calculation.

And so a familiar pattern often emerges.

The headquarters shifts south.

The R&D team remains in Montreal.

The servers may still operate in North America — but ownership consolidates elsewhere.

This is not villainy.

It is economics.

Canada has historically been strong at early-stage innovation. It has been weaker at anchoring global scale. The result is often a hybrid model: talent and research remain here, but equity appreciation and strategic control migrate outward.

Over time, that matters.

When scaling firms relocate, executive functions move. Board seats move. Long-term capital accumulation moves. Local economies retain employment, but they lose ownership.

This is the “missing middle” problem — the difficult transition from promising startup to globally competitive anchor company.

Infrastructure alone does not solve it.

But without domestic compute capacity, energy leverage, and policy clarity, the gravitational pull toward larger capital markets becomes stronger.

The question is not whether Canada can produce AI talent.

It can.

The question is whether it can convert that talent into sustained domestic ownership at scale.

That remains unresolved.

The Industry Stress Test

Now imagine that same Montreal firm preparing to build or lease larger compute capacity in Canada.

Securing access to GPUs is one step. Securing space inside a data centre connected to sufficient grid capacity is another. Industrial projects require permits, environmental assessments, transmission upgrades, and regulatory approvals. If those processes take significantly longer than in competing jurisdictions, capital looks elsewhere.

Speed is not cosmetic.

It shapes where investment lands.

At the same time, the company’s board is weighing long-term capital structure. Large financing rounds in the United States may come with deeper venture pools and clearer exit pathways. Stock option treatment, capital gains rules, and liquidity expectations all factor into recruitment and retention decisions.

Canada does not need to copy every incentive designed elsewhere. But firms compare entire operating environments, not single line items.

Then there is demand.

Public procurement policies and industry adoption quietly determine whether domestic firms have a stable launch market. If hospitals, governments, manufacturers, or financial institutions default to established foreign platforms, domestic AI firms face a paradox: build at home, but sell abroad.

Infrastructure without adoption risks becoming stranded capacity.

Adoption without domestic infrastructure deepens dependency.

The Montreal firm’s decision is not about patriotism or preference. It is about whether the surrounding ecosystem — speed, capital, and demand — makes scaling here viable.

That is the real test of any compute strategy.

Where Data Lives Matters

The Montreal firm’s servers have to sit somewhere.

So do the systems used by hospitals, banks, manufacturers, and government agencies across the country.

When we talk about AI infrastructure, we are not only talking about powerful chips performing calculations. We are also talking about the physical servers that store data — sometimes for years — inside the same data centres that house those chips.

The “cloud” is not abstract. It is a network of buildings filled with hardware, connected to power grids and fibre lines, located in specific jurisdictions.

When Canadian hospitals use AI-assisted imaging tools, when banks run fraud-detection systems, or when public agencies analyze large datasets, those systems both process and store sensitive information — medical records, financial transactions, personal identifiers, operational analytics.

Where that hardware sits determines where that data sits.

And where data sits determines which laws apply, who governs access, and where technical expertise accumulates over time.

If infrastructure and storage capacity are domestic, Canadian law governs access. Technical skill develops locally. Backup systems are integrated into our own grid.

If infrastructure and storage are external, those advantages accrue elsewhere.

The point is to recognize that operational control follows physical infrastructure, including the long-term stewardship of Canadian data.

In the twentieth century, owning rail lines and power grids shaped economic independence.

In the twenty-first, the question increasingly includes where digital information is processed, stored, and governed.

Hardware is not separate from data.

It is where data lives.

Connectivity Is Part of the Stack

Compute and storage do not operate in isolation.

They depend on connective tissue — fibre backbones, routing infrastructure, and increasingly low-earth-orbit satellite networks that extend service into remote regions.

Consider a northern community hospital relying on satellite connectivity to transmit diagnostic imaging to specialists in southern Canada. AI-assisted tools may help analyze those scans. But the system’s reliability depends not only on compute capacity and electricity, but on the networks carrying that data.

Canada’s geography makes this layer especially relevant.

Low-earth-orbit satellite systems have expanded connectivity in rural and northern communities where terrestrial infrastructure is limited. That expansion improves access. But when those systems are owned and operated beyond Canadian jurisdiction, another layer of dependency enters the stack.

Connectivity is not separate from sovereignty.

It is part of the same architecture.

Compute.

Energy.

Capital.

Ownership.

Connectivity.

Weakness in any one layer shapes the resilience of the whole.

The Time Horizon Problem

Infrastructure rewards patience.

Political cycles reward speed.

Data centres take years to plan and build. Grid upgrades span electoral cycles. Capital markets move faster than public processes. Regulatory frameworks evolve even more slowly.

Canada has often defaulted to integration rather than ownership — participation rather than anchoring control. That approach has delivered stability and access. It has not always delivered leverage.

The AI infrastructure build now underway is an attempt — modest by global standards, but deliberate — to adjust that pattern.

Whether it succeeds will depend less on announcements and more on alignment:

Hardware access.

Energy capacity.

Capital formation.

Adoption.

Policy clarity.

Industrial strategy is not a headline.

It is cumulative discipline.

Quietly Urgent

In the twentieth century, electricity grids powered factories. Rail and highway networks connected markets. Telecommunications shrank distance. Financial systems enabled capital to move at scale. Each reshaped the economic terrain of the country.

Artificial intelligence is not simply another sector layered onto that terrain.

It is becoming part of the terrain itself.

Compute capacity will influence healthcare systems, manufacturing output, energy management, logistics, public administration, defence planning, and capital markets — simultaneously. Few technologies in modern history have cut across so many domains at once.

If AI infrastructure becomes as foundational to this century as electricity, transport, and telecommunications were to the last, then public attention that treats it as peripheral is not proportionate to the stakes.

This is not about enthusiasm for new tools.

It is about who builds, owns, and governs the systems that will shape productivity, public safety capacity, and national leverage for decades.

Infrastructure does not poll well.

But it defines the map.

The question is whether Canada shapes any of it.

This article is published on Substack for conversation and sharing. The canonical home for this work is Between-the-Lines (between-the-lines.ca).

What an excellent article. As a technologically inept person, I have gained some insight. I believe two things must occur. The first is the establishment of Canadian sovereign cloud systems and AI.

The concern I have always had is that there is a great deal of innovation and invention in Canada across defence and technology. These SMEs gain significant traction, then are headhunted by foreign, particularly American, firms. We have to severely reduce this process through government restrictions, tax incentives for research, or whatever works.

I have heard of cases where Canadian companies develop leading-edge tech subject to Canadian IP laws, then get sold to the US. When those companies later improve the technology or develop further innovations, they need approval under US IP laws to sell the products to foreign customers. That to me is a lose/lose situation.

Quantum computers are starting to be used for AI applications as complementary solutions (instead of relying solely on AI data centres based on NVIDIA chips). D-Wave is a Canadian company (based in Burnaby, BC) that has been producing quantum computers for years.

AI response on a Brave engine search of "dwave and ai" : "D-Wave Quantum is actively advancing the integration of quantum computing with artificial intelligence (AI) and machine learning (ML), focusing on solving complex optimization and generative AI tasks. The company has introduced a new open-source quantum AI toolkit that enables seamless integration between D-Wave’s quantum processors and PyTorch, a leading ML framework. This toolkit allows developers to build and train Restricted Boltzmann Machines (RBMs)—used for generative AI tasks like image recognition and drug discovery—by leveraging quantum computing to accelerate training on large, complex datasets.

D-Wave’s annealing quantum computing approach is particularly well-suited for optimization problems central to AI, such as feature selection, model training, and hyperparameter tuning. The company has demonstrated real-world applications through collaborations with organizations like Japan Tobacco Inc., where quantum-assisted AI improved drug discovery workflows, and TRIUMF, where quantum simulations of particle interactions showed significant speedups. Additionally, D-Wave’s Leap Quantum LaunchPad™ program and on-premises Advantage™ systems enable organizations to experiment with hybrid quantum-classical AI solutions.

In defense and aerospace, D-Wave partners with Davidson Technologies to develop AI-powered applications for national security, including supply chain optimization and logistics management, with real-time quantum processing enabling faster decision-making. D-Wave continues to expand its quantum AI capabilities through new tools, partnerships, and a focus on hybrid computing, positioning itself at the forefront of practical quantum-AI innovation."

Where D-Wave becomes complementary to AI data centres, specifically (search on the terms "dwave quantum computer efficiency compared to ai data centres"): "D-Wave's quantum computers are designed for energy efficiency and specialized optimization tasks, offering a distinct advantage over traditional AI data centers in specific workloads.

Energy Efficiency: D-Wave’s Advantage2 quantum computer operates on just 12.5 kilowatts of electricity, the same power footprint as its earlier systems, despite a significant increase in performance. This stability in energy use, even with 4,400 qubits, makes it highly energy-efficient compared to large-scale AI data centers that consume megawatts of power for training massive models.

Optimization Over General AI Workloads: While AI data centers rely on GPUs and TPUs to train and run large machine learning models, D-Wave’s annealing quantum computers excel at solving complex optimization problems—such as logistics, scheduling, and materials science—faster and with less energy than classical methods. For example, researchers at TRIUMF and Japan Tobacco have used D-Wave systems to accelerate drug discovery and generate high-quality samples for AI training with lower energy consumption.

Hybrid Quantum-Classical Workflows: D-Wave integrates quantum processing with classical computing through its Leap cloud platform, enabling hybrid solvers that handle problems too large for quantum systems alone. This allows businesses to leverage quantum speedups for parts of AI workflows—such as pre-training optimization—while relying on classical infrastructure for the rest, improving overall efficiency.

Quantum Advantage in Specific Tasks: D-Wave claims its systems have achieved "beyond-classical computation" on real-world problems, such as simulating quantum dynamics in magnetic materials and optimizing high-energy particle interactions. These tasks, which would take classical supercomputers centuries, are completed in minutes with significantly lower energy use.

Limitations: D-Wave is not a general-purpose computer. It does not replace AI data centers for broad AI training but instead complements them by solving specific, high-value problems faster and more efficiently. Critics remain skeptical about claims of "quantum supremacy," but real-world applications in industry and research continue to grow.

In summary, D-Wave’s quantum systems are not direct replacements for AI data centers but offer a highly efficient, specialized alternative for optimization and simulation tasks, potentially reducing time and energy costs in AI-driven research and industrial applications."

And D-Wave is already partnering with several organizations in advancing AI: "D-Wave is partnering with several organizations to advance AI applications using quantum computing, including:

Zapata AI: A multi-year strategic partnership focused on developing commercial applications that combine generative AI and quantum computing, particularly for accelerating new molecule discovery and solving complex optimization problems. This collaboration leverages D-Wave’s Advantage™ annealing quantum systems and Zapata’s Orquestra® platform.

Comcast: Collaborating with D-Wave and Classiq to launch a quantum lab testing quantum computing for next-generation network management, including traffic optimization and predictive issue resolution, to address rising data demands from AI and streaming.

Japan Tobacco Inc. (JT): Completed a joint proof-of-concept using D-Wave’s quantum technology and AI in drug discovery, where the quantum approach outperformed classical methods in AI model training.

Davidson Technologies: Partnering on quantum-powered AI research, including national defense applications, with D-Wave’s Advantage2 system hosted at Davidson’s Huntsville facility.

Unissant: A co-marketing and co-selling partnership to help federal agencies adopt hybrid quantum-classical technologies for AI-driven use cases like fraud detection, anomaly identification, and workforce modeling.

Southeastern Quantum Collaborative (2026): Joined as an inaugural member, partnering with IBM, Alabama A&M University, and regional universities to advance quantum information science and workforce development, embedding D-Wave’s technology in defense and industrial training programs."

Hopefully we can also develop a Canadian alternative to NVIDIA.